April 15, 2010 (Vol. 30, No. 8)

Advanced Solutions Help Organize and Optimize Vast Amounts of Information

As high-throughput screening technologies are producing increasingly vast quantities of data, correspondingly robust data-analysis tools are necessary to help scientists sift through it for usable information. One solution to the problem is to use bigger, more powerful computers. However, software techniques for data integration and analysis can make huge datasets more manageable for a typical desktop PC. These methods will take center stage at CHI’s upcoming “Bio-IT World” conference.

Developing better tools for the analysis of small molecule screening data will be the subject of a presentation by the Broad Institute’s Raza Shaikh, Ph.D., associate director of informatics for the chemical biology platform. Broad received a $100 million, five-year grant as part of the Molecular Libraries Probe Production Centers Network (MLPCN) to develop data-analysis tools for Pubchem data. The Molecular Libraries Program seeks to bring the tools of high-throughput screening, widely used in the pharmaceutical industry, to public-sector science.

One of the difficulties in dealing with such a volume of data is in hit calling and decision making. If an assay is tested with 300,000 compounds, thousands of positive hits will result. Of these positive hits, many will be false positives or will ultimately be biologically irrelevant. Smarter tools for sorting out these hits streamline the decision-making process.

“The motivation behind the small project that we did is to allow that knowledge search and decision making to happen quickly and in an automated fashion. Rather than manually searching each of these assays one-by-one in Pubchem, our goal is to reduce two days’ worth of work to fifteen minutes,” says Dr. Shaikh.

The program produces a real-time report of compound inhibition in known assays. The greatest challenge, adds Dr. Shaikh, is the variety and inconsistency of the source data. “When people submit data to Pubchem, there are countless variations in terminology that have to be individually addressed within the software.”

Sage Bionetworks is seeking to show how integrative genomics can be used to characterize psychiatric phenotypes in sleep disorders.

Integrative Genomics

New genomics techniques like next-generation sequencing produce overwhelmingly large datasets that present a challenge in data analysis. Some solutions are available for data storage and transfer, but analytical manipulation adds an extra computational burden. Common data-analysis techniques such as normalization, when applied to a huge dataset, output a dataset that overwhelms the memory on a typical computer, causing CPU performance to drop.

Marc Bouffard, senior bioinformatician, Montreal Heart Institute and Genome Quebec Pharmacogenomics Center, has developed a data-analysis system called CASTOR QC (comprehensive analysis and storage) that solves some of the most common problems in the analysis of genomic data: data redundancy, data formatting, and the use of flat files.

“The idea is to move away from the old-school way of doing things,” says Bouffard. “With smaller studies, people have been able to look at their data—open a file, page down. With the newer studies, that’s just not practical. You really need to move to a data format that is optimized for computer or automated processing.”

Bouffard began developing CASTOR QC because the Montreal Heart Institute wanted a faster way to analyze data from a project examining the toxicity of statins and other lipid-lowering drugs. The project included 7,000 patients with a half-million SNPs each. The conventional analysis methods tended to be memory bound, only processing properly if the file could fit into memory.

Supercomputing solutions such as cloud computing offer some relief from this bottleneck. However, many genomic datasets including SNP studies and next-generation sequencing produce datasets so large that the bandwidth limits the transfer of data into and out of the database.

“We basically started from the beginning and looked at the data to find a way to store it without losing any information in a format that is optimized for analysis,” says Bouffard. “So far the results are very good.” A side benefit of this more efficient method of analyzing data is that the datasets are much smaller—as little as 15% of the original size—which reduces the cost of data storage.

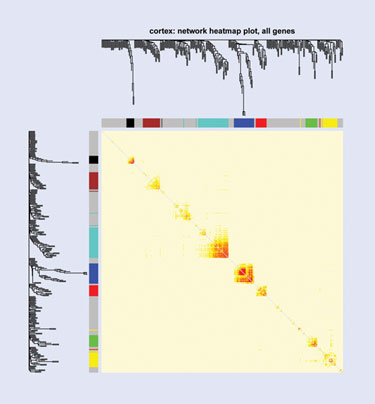

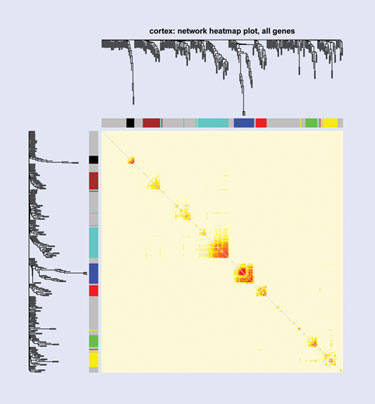

A presentation by Joshua Millstein, Ph.D., biostatistics, senior scientist, statistical genetics, Sage Bionetworks, will show how integrative genomics can be used to intensively characterize psychiatric phenotypes in sleep disorders in a genome-wide microarray expression study, with the ultimate goal of identifying targets and biomarkers for therapy. Dr. Millstein used a line of inbred mice to identify genes that associate with a sleep trait that is also associated with depression.

“We’re also placing these genes in the context of cell-wide networks of gene expression and identifying regions of these networks that are associated with clinical traits of interest. In this way we can place these genes in the context of global expression and determine whether they’re likely to cause cell-wide changes in expression or influence important genes,” says Dr. Millstein.

To perform the data analysis, the team built co-expression networks using soft thresholds to determine relationships between genes, and to identify co-expressed modules—groups of genes that tend to be expressed as a group between individuals. Bayesian modeling techniques incorporated genotype information. They also used a causal driver method, a network-based approach to identify nodes in the network that influence other nodes.

Collecting the data involved a labor-intensive quality-control methodology. The EEG and EMG sleep data was collected over a period of 48 hours for each mouse, but the experiment spanned the course of an entire year. This meant a scientist had to check each dataset to ensure that it mapped correctly, and follow up on any unusual patterns. RNA quality was crucial, because RNA degrades rapidly. “The standard expression in statistics is garbage in, garbage out. It’s very hard to generate a high-integrity dataset with so many components. Every component needs to be high quality for the end result to be reliable,” says Dr. Millstein.

3-D simulation of brain tumor growth using an agent-based model. Heterogeneous cancer cel clones and phenotypes are shown.

Agent-Based Modeling

Agent-based modeling is an approach that can be used to develop hypotheses and make predictions about a biological system. Like many of the tools of systems biology, it benefits from a multiscale approach. However, this creates an extremely dense dataset that can be unwieldy and difficult to integrate into a functional workflow.

Thomas Deisboeck, M.D., associate professor, radiology, Massachusetts General Hospital, Harvard Medical School, will present his work on a hybrid discrete and continuum agent-based cancer model. It simulates each cancer cell, equipped with cell-signaling pathways, and the cells interact on three-dimensional lattices that resemble microenvironments in tissue. This cross-scale technique allows validation of biomarkers and discovery of novel molecular targets.

Dr. Deisboeck has been able to observe in silico patterns that emerge in multicellular populations over time, in brain and lung cancer, which can then be compared to histology samples and patient imaging data. Using a patient’s MRI as a starting point, Dr. Deisboeck simulates tumor growth, and has begun to predict patient-specific cancer progression. “We were able to predict, in a patient case study, tumor recurrence earlier than it was visible on MRI,” says Dr. Deisboeck, referring to a retrospective case study of an individual with a brain tumor.

Normally, the resolution limits of conventional imaging technology would not allow physicians to study the cancer on a single-cell level. Dr. Deisboeck’s agent-based modeling uses simulation techniques to push the resolution beyond the natural limits of the instrument, providing a personalized computational model of an individual cancer. Another limitation exists on the computational side of the experiment, where extremely dense datasets strain the capacity of the systems.

While still at a nascent stage, Dr. Deisboeck notes that the use of multiscale, multiresolution modeling will allow the scientists to simulate selectively at various levels of granularity, choosing higher resolution for areas of interest such as the margins of the tumor where growth and invasion are more likely to occur and lower resolution for areas that are less likely to change quickly such as the center of the tumor. This makes the dataset more manageable and less computationally costly in an effort to simulate progression across multiple scales up to clinically relevant tumor sizes.

Knowledge Management

The presentation by Yuri Nikolsky, Ph.D., CEO of GeneGo, will focus on systems for knowledge management in large organizations. Pharmaceutical companies often have disparate databases as a result of acquiring many smaller companies, having many locations, or having many databases. Scientists may all be doing the same thing, but using slightly different words and organizational schemes.

Bringing all of that data together requires the construction of a knowledge base and a common ontology. GeneGo specializes in creating these systems for an organization using an underlying database called Metabase, which is its infrastructure. GeneGo manually exchanges the contents and classifies data into ontologies, which function like file folders in the system. The company uses a controlled vocabulary to standardize the data from different sources and to make data and metadata easier to find. This is done by creating synonym libraries, which can contain as many as three million entries.

“GeneGo is working on a lot of ontology projects for pharma where we help them with controlled vocabulary. For example, if someone calls something a Granny Smith and another one a Golden Delicious, we call them apples and add them to the database,” says Julie Bryant, vp, business development and sales. All of this structure overlays the company’s original infrastructure—rather than replacing it completely—helping to preserve the functionality that was there originally.

In addition to remodeling a company’s data infrastructure, the Metabase knowledge-management system is useful for creating open-access data repositories for sharing between institutions. Some of GeneGo’s current customer projects are open in nature. “Several pharmaceutical companies have come to us and said, ‘We want to put things in an open forum. So, we want the ontologies you’ve built to be industry standard and put them out there in the public domain,’” says Bryant.

Newer technologies for data integration and analysis emphasize sleek solutions that fit into an existing workflow, rather than replace it. Significant advances include methods for removing unnecessary information from large datasets to make them more compact and tools that make it easier to obtain and share information. Advanced data-integration and analysis tools make it possible to leverage the full power of a high-content screen or a database and aid in hypothesis development, decision-making, and prediction.