July 1, 2010 (Vol. 30, No. 13)

Emerging Enhancements Address Limitations of This Essential Laboratory Technique

Since the origin of PCR in 1983, there have been many enhancements and adaptations. Current trends, recently cited in a report by HTStec (qPCR Assay Trends, 2008), indicate a focus on faster reagents, shorter run times, and new applications such as expression profiling and multimarker diagnostics.

However, some limitations remain, including high instrument and consumable costs as well as challenges in data analysis. Automation of qPCR is expected to improve assay quality and throughput. The recent “Developments in Real-Time PCR Research and Molecular Diagnostics” conference shed some light on advances that will address current challenges.

Researchers at Qiagen-DxS developed a novel, real-time Taq extension assay to assess the strength of the amplification refractory mutation system (ARMS) switch. ARMS is allele-specific PCR in which the primer acts like a switch: when it matches the template, PCR occurs. It is used to detect gene mutations and SNPs. Jane Theaker, R&D team leader, noted that existing Taq polymerase activity assays rely on radioactivity, are laborious, and do not run in real time.

“We didn’t have an easy way of assessing the strength of the ARMS switch.” She added that it’s difficult to dissect out ARMS extension from other whole PCR effects such as total amplification efficiency, presence of primer-dimers, and side products. The new approach also provides the ability to vary parameters not practical in PCR. It also saves time and costs by using specific primers, she said.

Traditional PCR uses primers specific to a sequence; in ARMS, the primers are modified at the 3´ end to make them allele specific. Making that last base specific for the allele under investigation is what lets the primer act as a switch for PCR.

In this assay, only extension products are generated; no PCR cycling occurs. There are 4,096 separate data points (64 primer combinations x 64 template combinations). Combinations in which all three bases are mismatched are excluded because they would be highly unlikely to misprime.
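The arithmetic behind those figures can be sketched in a few lines. This is only an illustration under stated assumptions: that the 64 combinations come from three variable base positions with four possible nucleotides each (4 x 4 x 4 = 64), and that "mismatched" means the primer and template disagree at a given position. The function name and the simplification of representing each template by the primer it would pair with perfectly are hypothetical, not taken from the Qiagen-DxS work.

```python
from itertools import product

BASES = "ACGT"

def count_combinations(n_positions=3):
    """Return (total primer/template pairs, pairs mismatched at every position)."""
    # Assumption: each template is represented by the primer sequence that would
    # pair with it perfectly, so a "mismatch" is simply a differing letter.
    seqs = ["".join(p) for p in product(BASES, repeat=n_positions)]
    total = 0
    all_mismatched = 0
    for primer in seqs:
        for template in seqs:
            total += 1
            if all(p != t for p, t in zip(primer, template)):
                all_mismatched += 1
    return total, all_mismatched

total, excluded = count_combinations()
print(total, excluded, total - excluded)  # prints: 4096 1728 2368
```

Under these assumptions, each primer has 3 x 3 x 3 = 27 templates that mismatch at all three positions, so 1,728 of the 4,096 pairs would be set aside. The article does not state how many combinations were actually excluded.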

Theaker claimed that the assay provides an actual measurement via mathematical modeling. “We can put a finger on how the Taq extension works, rather than basing it on a threshold cycle you might get with real-time PCR. This is useful in one PCR application but may not be applicable across all PCR situations where you have different templates. We’re just looking at the efficiency of the 3´ end of the primer and that’s what gives us specificity and will be applicable across many different templates.”

She added that they haven’t proved that this is a better way of assessing what will occur in PCR, but that “some of the initial data leads us to believe that we can use extension data to predict what’s going on in PCR.”

The new assay applies wherever allele-specific PCR is required, such as in genotyping, designing companion diagnostics, and developing new diagnostic assays. However, Theaker said it won’t be launched as a product; rather, the information will be publicly available after it’s published.

Ultrafast Techniques

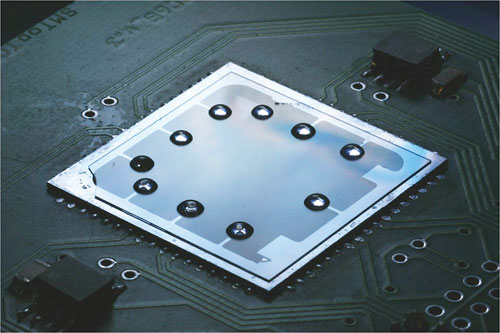

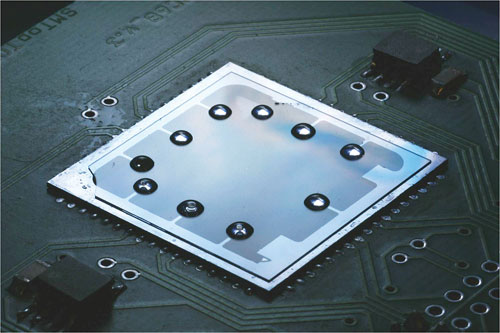

A micro real-time PCR platform, consisting of a microscope cover slip on top of a micromachined silicon chip integrated with a heater and temperature sensor, has been developed by a team at KIST-Europe. The system measures about 5 x 10 x 4 cm. Pavel Neuzil, Ph.D., team leader, clinical diagnostics, and co-developer, said the impetus behind making the system was to develop something to detect H5N1 virus in undeveloped countries.

“This allows the detection of any gene in about 15 minutes versus 30 minutes to 2 hours in a normal PCR platform,” he remarked, adding that the hardware is about 10 to 20 times cheaper and the consumables almost 100 times cheaper. He predicts that manufacturing costs will be close to $150, versus $5,000 to $20,000 for conventional instruments.

The DNA sample is placed on the microscope cover slip and encapsulated with mineral oil, creating a virtual reaction chamber. Dr. Neuzil said the platform can be used for any sample, depending on the primers added.

“Basically, you can use any sample and look for HIV, paternity testing, and such.” The microscope cover slip is disposed of once the reaction is completed, avoiding sample cross-contamination, and a new one may be added for the next run. The labeling system used is SYBR Green I. The optical system is derived from those used in DVD players.

Although the system can’t compete with the big machines used in labs or hospitals, Dr. Neuzil noted that this micro platform’s potential applications include point-of-care, homeland security (anthrax), education, and field applications. He is hoping it will soon be manufactured.

A micro real-time PCR platform consisting of a microscope cover slip on top of a micromachined silicon chip has been developed by a team at KIST-Europe. Ten virtual reaction chambers (VRC) consisting of 0.6 µL of oil covering 0.2 µL of PCR mixture are placed on the surface of the glass. Each VRC represents a single PCR spot.

Maximizing HRM Assay Performance

“HRM—high-resolution melting—is an emerging technology that’s a natural outgrowth of the PCR arena,” stated David Schuster, Ph.D., director of R&D at Quanta Biosciences. The company is developing reagents to enhance this assay for genetic variation analysis and gene quantification. Dr. Schuster added that the key to transition from qPCR to HRM is the dyes.

“In theory, you want to use a dye that’s occupying every site, and as you increase the temperature and DNA is dissociating more and more, the dye no longer binds because it’s single stranded. So you have a direct decrease in fluorescence as the DNA is melting. The promise of HRM is to be able to detect subtle differences in sequence variation. So, if you have a well-behaved system, you can readily resolve even silent base changes (transversions)—like C-G SNPs, you can actually pick those up.”

The Accu-Melt HRM SuperMix reagent has been engineered to maximize the melting differences between sequence variants. It should work with any HRM assay, but the company is currently focused on SNP assays. Other potential applications include low-resolution assays to identify gene knockouts. Traditionally this would be done via PCR with three primers. However, doing it via HRM would make it possible to distinguish subtle sequence variations and would eliminate the gel.

The firm said the new reagent should save considerable time because much less optimization is required. Dr. Schuster added that it should work for methylation analysis as well, although it hasn’t been validated yet. “We’ll be looking to see what the challenges are with it and provide unique solutions as they arise. Since there are so many diverse applications of HRM, I don’t think there’s going to be one formulation for universal application. That diversity brings a lot of complexity.”

Although many instrument and reagent companies are starting to support HRM, Dr. Schuster said it still faces some big challenges. One is finer temperature control. The difference in melting temperature between the alleles of an A-T SNP may be 0.2°C, but most machines only resolve down to 0.5°C. Algorithms attempt to compensate through a process called temperature shifting, in which curves are normalized along the x-axis (temperature). However, he cautioned, better methods for standardizing the data-analysis algorithms are needed.
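One possible reading of temperature shifting can be sketched as follows. This is a minimal illustration, not any vendor's algorithm: it assumes each melt curve is first rescaled to run from 1 (fully double-stranded) to 0 (fully melted) and is then slid along the temperature axis so that it crosses a chosen fluorescence level at the same temperature as a reference curve. The function names, the 0.1 threshold, and the synthetic sigmoid curves are all hypothetical.

```python
import math

def normalize(fluor):
    """Rescale fluorescence so the pre-melt plateau is 1 and the post-melt baseline is 0."""
    hi, lo = max(fluor), min(fluor)
    return [(f - lo) / (hi - lo) for f in fluor]

def crossing_temperature(temps, fluor, level):
    """Temperature where a falling curve crosses `level`, by linear interpolation."""
    points = list(zip(temps, fluor))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= level > f1:
            return t0 + (f0 - level) / (f0 - f1) * (t1 - t0)
    raise ValueError("curve never crosses the requested level")

def temperature_shift(temps, fluor, reference_temp, level=0.1):
    """Slide a normalized curve along the x-axis so it crosses `level` at reference_temp."""
    offset = reference_temp - crossing_temperature(temps, fluor, level)
    return [t + offset for t in temps], offset

def synthetic_melt(temps, tm, width=0.5):
    """Hypothetical sigmoid melt curve with arbitrary fluorescence units."""
    return [50 + 1000 / (1 + math.exp((t - tm) / width)) for t in temps]

# Two synthetic curves whose melting temperatures differ by 0.2 degrees.
temps = [75 + 0.1 * i for i in range(101)]
curve_a = normalize(synthetic_melt(temps, 80.0))
curve_b = normalize(synthetic_melt(temps, 80.2))

ref = crossing_temperature(temps, curve_a, 0.1)
shifted_temps, offset = temperature_shift(temps, curve_b, ref)
print(f"offset applied to curve B: {offset:.2f} degrees")
```

A shift of this kind removes any pure offset between curves, whatever its cause, leaving only differences in curve shape. Choices such as the threshold level are exactly the kind of parameter that varies between implementations.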

HT Expression Profiling

The bottlenecks and challenges of qPCR no longer reside in the technology, but rather in what occurs upstream and downstream, explained Mikael Kubista, Ph.D., founder of TATAA Biocenter. “The upstream, or preanalytical phase, is a major challenge, especially for gene-expression analysis,” he said.

This is due to several factors. Most sample-collection tubes contain EDTA, which removes magnesium and greatly affects expression; some genes are up-regulated 20-fold. Tissue samples often have degraded mRNA due to nucleases released upon collection. “I read somewhere that about 85 percent of samples being analyzed from tissue are formalin-fixed embedded tissues,” said Dr. Kubista. “This material is often quite damaged and questionable in terms of expression-profiling analysis.”

Since biological material is heterogeneous, it’s difficult to measure a particular disease signature above the variable background. This has led to high-throughput (HT) single-cell expression profiling. HT is needed because expression occurs in bursts. “If you take a snapshot of the RNA levels in an individual cell, they vary. In order to obtain statistics, you have to measure at least 50 cells to get a signature. We know the most powerful signatures are not only gene-expression profiles, but also correlation between genes,” Dr. Kubista explained.

This correlation is key to the downstream process, or data analysis and mining. Although most researchers analyze one gene at a time, this is not very powerful due to high background noise. “However, if you monitor five to ten markers, you can take advantage of a correlation of gene expression. This will be important in the future for not only diagnostics, but to prognostics and prediction of response to therapy.”

Dr. Kubista also said there will be a trend toward combining information from more measurements to include all the markers involved; this is known as multimodal analysis. “All the tools are there, but nobody is putting everything together. This has been recognized as an important field in Europe, but what is missing is a simplified work flow.”

He added that there is still too much user intervention introducing large errors and that traceability is not as good as it used to be. However, he says more people are making an experimental design first and performing replicates at all steps of the experimental procedure. “We are learning how to optimize the experiment by finding out where the variability comes from and then discovering how many subjects are required to measure what is needed, like two-fold expression.”

This is called power-testing and is key, said Dr. Kubista, because there are many studies with either too few (unable to detect differences) or too many (wasting money) subjects. Since this approach requires HT, Dr. Kubista says the field will be moving toward more outsourcing and researchers will be more focused on planning experiments and collecting samples.
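The trade-off between too few and too many subjects can be made concrete with a textbook sample-size estimate. The sketch below is an illustration only, not TATAA Biocenter's method: it uses the standard normal-approximation formula for a two-sided, two-group comparison, and it assumes that expression is compared on the Cq (log2) scale with 100 percent PCR efficiency, so that a two-fold difference equals one cycle. The function name and the standard deviations used are hypothetical.

```python
from math import ceil, log2
from statistics import NormalDist

def subjects_per_group(fold_change, sd_cq, alpha=0.05, power=0.80):
    """Approximate subjects per group needed to detect `fold_change`.

    sd_cq is the assumed standard deviation of Cq values between subjects.
    """
    # Assumption: 100% PCR efficiency, so a fold change maps to log2(fold) cycles.
    delta = log2(fold_change)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    n = 2 * ((z_alpha + z_beta) * sd_cq / delta) ** 2
    return ceil(n)

# Hypothetical variability levels, in cycles, for a two-fold difference.
for sd in (0.5, 1.0, 2.0):
    print(sd, subjects_per_group(2, sd))
```

Because the required number grows with the square of the variability, halving the standard deviation cuts the number of subjects roughly four-fold, which is why locating the sources of variability comes first.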

Rapid Quantification of DNA Libraries

In order to address some of the current challenges of qPCR, Agilent Technologies has launched a mutant Taq DNA polymerase. “There is a big drive for high throughput,” explained Raza Ahmed, Ph.D., product specialist. “The two ways this is done is by increasing the number of reactions per plate and by decreasing the assay run time. Unfortunately, as you shorten the run time, you compensate for a loss of sensitivity and performance.”

The Brilliant III UltraFast qPCR MasterMix contains a mutant Taq polymerase that can accommodate these challenges and tolerate any inhibitors in the sample (it has been tested in whole blood and various cells). “This provides a 60 percent time savings, and we now have the fastest enzyme on the market, enabling a qPCR reaction in 30 minutes.”

Dr. Ahmed explained that, rather than using an antibody-based enzyme, Agilent modified a chemical reagent to minimize background noise from nonspecific amplification. This is because the antibody has to be specific to activate Taq and there is potential for it to fall off. “This chemical reagent is much faster and you can go as short as three minutes on preamplification with 90 percent polymerase activity.”

The overall advantages include the ability to perform fast qPCR without losing sensitivity, to amplify targets even in the presence of PCR inhibitors, and to minimize nonspecific amplification via a modified, chemical hot start.

Current applications include pathogen detection, gene expression, and genotyping. A new application for single-cell analysis will be launched soon, and Dr. Ahmed said they are considering splice variants and methylation.

The company also launched a library quantification kit for next-generation sequencing that includes the Brilliant III enzyme. Primers specifically designed for the Illumina genome sequence analyzer are key. “This is important because the current issue with sequencing is that researchers are unable to obtain high-quality data from the DNA sequences because there are issues having the correct amount of DNA to put on the sequencers. This is the big bottleneck at the moment.” The Brilliant III reportedly enables faster library quantification than current methods such as fluorescent dyes.

Agilent Technologies’ Brilliant III UltraFast qPCR MasterMix features a newly engineered Taq that reduces cycling time and improves sensitivity, the company reports. The firm says the Brilliant III reagents’ faster extension rate, along with hot-start technology, minimizes nonspecific amplification.